How to Run an AI SDR Pilot Program That Actually Works

Most AI SDR pilots fail before they start. Teams spin up a tool, fire off a few sequences, and judge the results on open rates — missing the deeper question: did the AI actually move buyers forward, or just generate noise?

A well-designed AI SDR pilot answers a specific business question with measurable evidence. It runs for 30–60 days, covers a defined ICP segment, and tracks outcomes beyond vanity metrics. Tools like Apollo's AI Sales Assistant make it easier to design and execute a pilot end-to-end — from ICP list building and account research to sequence creation and outreach automation — all from natural-language instructions. Here's exactly what a credible AI SDR pilot looks like in 2026.

Key Takeaways

- A structured AI SDR pilot runs 30–60 days, tests a single ICP segment, and measures pipeline outcomes — not just open rates.

- Buyer behavior has shifted: relevance and message consistency are now measurable deal-killers that your pilot must explicitly guard against.

- The best pilots phase autonomy — human review first, then expand automation only after quality thresholds are met.

- SDRs and RevOps leaders gain the most from pilots that consolidate prospecting, sequencing, and enrichment in one unified platform.

- Governance controls (approved message libraries, pre-send review, deliverability readiness) are no longer optional — they're table stakes.

What Is an AI SDR Pilot Program?

An AI SDR pilot is a time-boxed, controlled test of an AI-powered outreach tool against a specific prospect segment, designed to measure pipeline impact and operational lift. It is not a full deployment, a proof of concept built on cherry-picked data, or a generic "try the tool for a month" exercise.

The goal is a decision-quality answer: does this tool generate qualified meetings at an acceptable cost and quality bar?

Research from professional networks found that 56% of sales professionals now use AI daily, and those users are twice as likely to exceed quota. That adoption gap makes a structured pilot the fastest way to assess whether your team will be in the leading or lagging half.

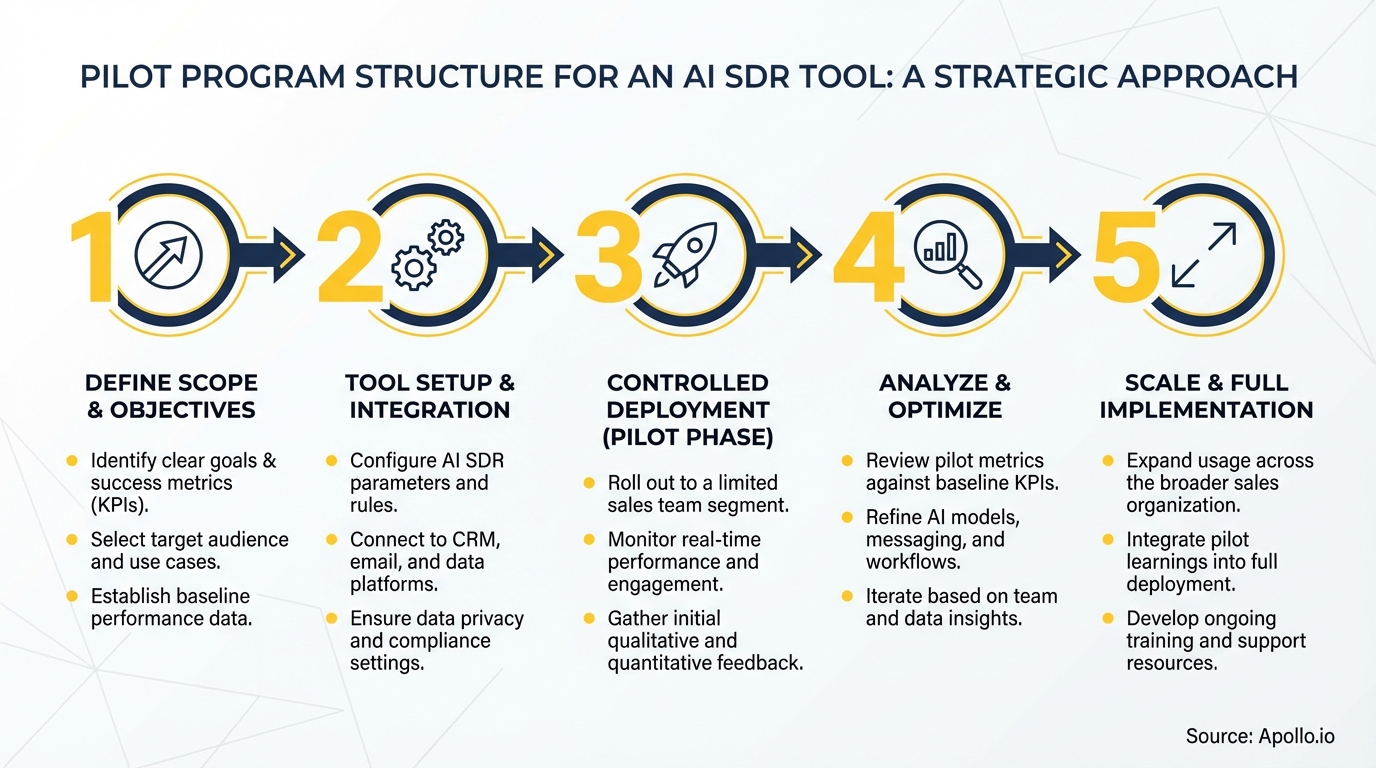

What Does the Pilot Structure Look Like?

A credible AI SDR pilot has four phases: readiness, co-pilot, measured autonomy, and scale decision.

| Phase | Timeframe | Key Activities | Exit Criteria |
|---|---|---|---|
| 1. Readiness | Week 1 | Domain auth (SPF/DKIM/DMARC), list verification, ICP definition, baseline metrics, message library approval | Inbox placement confirmed; ICP segment locked |
| 2. Co-pilot | Weeks 2–3 | AI drafts messages; humans review and approve before send; A/B test 2–3 variants per segment | Brand/compliance pass rate meets threshold (e.g., 95%+) |
| 3. Measured Autonomy | Weeks 4–6 | Expand automation for approved message types; hold out 20% control group; track reply rate, meeting rate, SQL quality | Pilot cohort vs. holdout shows positive lift |
| 4. Scale Decision | End of Week 6–8 | Compile scorecard; present pipeline impact, cost-per-meeting, rep time saved, and governance incidents | Stakeholder go/no-go on full rollout |
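
The 20% holdout in Phase 3 only works as a control if contacts are assigned without cherry-picking. One possible approach, sketched here under assumptions, is a deterministic hash of the contact ID so the same contact always lands in the same cohort. The function name, the `salt` value, and the `contact-N` IDs are illustrative, not part of any vendor's API.

```python
import hashlib

HOLDOUT_SHARE = 0.20  # the 20% control group described in Phase 3


def assign_cohort(contact_id: str, salt: str = "ai-sdr-pilot") -> str:
    """Return 'holdout' or 'pilot' deterministically for a contact ID."""
    digest = hashlib.sha256(f"{salt}:{contact_id}".encode("utf-8")).hexdigest()
    # Map the first 8 hex characters to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "holdout" if bucket < HOLDOUT_SHARE else "pilot"


# Hypothetical contact IDs, used only to show the split.
contacts = [f"contact-{i}" for i in range(1000)]
cohorts = {c: assign_cohort(c) for c in contacts}
holdout_count = sum(1 for v in cohorts.values() if v == "holdout")
print(f"{holdout_count} of {len(contacts)} contacts held out")
```

Because the assignment depends only on the ID and the salt, it can be recomputed later to audit the CRM tags for pilot versus control contacts.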

Deliverability readiness in Phase 1 is non-negotiable. Pilot results are meaningless if email lands in spam. Domain authentication, list hygiene, and inbox placement monitoring must be confirmed before the first message sends. Explore sales intelligence tools that include built-in verification to reduce this setup burden.

What KPIs Should an AI SDR Pilot Track?

The right KPIs for an AI SDR pilot go beyond opens and clicks — they connect AI activity to downstream pipeline outcomes.

- Positive reply rate: Target segment vs. control holdout

- Meeting booked rate: Meetings per 100 contacts touched

- SQL conversion rate: Did AI-sourced meetings convert at parity with human-sourced?

- Message approval rate: % of AI drafts that passed human review without edits (governance signal)

- Verification burden: Time reps spend reviewing AI output per approved message (tracks whether AI adds workflow strain)

- Cost per meeting: Total pilot cost divided by meetings booked
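
The outcome KPIs above reduce to a few ratios over per-cohort counts. The following is a minimal sketch, assuming you can export those counts from your CRM; the `CohortCounts` fields and the sample numbers are hypothetical and are not results from any real pilot.

```python
from dataclasses import dataclass


@dataclass
class CohortCounts:
    contacts_touched: int
    positive_replies: int
    meetings_booked: int
    sqls: int
    total_cost: float


def safe_div(numerator, denominator):
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def pilot_kpis(c: CohortCounts) -> dict:
    return {
        "positive_reply_rate": safe_div(c.positive_replies, c.contacts_touched),
        "meetings_per_100_contacts": safe_div(100 * c.meetings_booked, c.contacts_touched),
        "sql_conversion_rate": safe_div(c.sqls, c.meetings_booked),
        "cost_per_meeting": safe_div(c.total_cost, c.meetings_booked),
    }


# Illustrative numbers only.
example = CohortCounts(contacts_touched=400, positive_replies=28,
                       meetings_booked=12, sqls=6, total_cost=3000.0)
for name, value in pilot_kpis(example).items():
    print(name, value)
```

Running the same function on the control holdout gives the baseline column for comparison.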

Tracking verification burden matters because governance is a real adoption barrier. MarketsandMarkets reports AI SDR tools can free up meaningful SDR time for high-value activities — but only when the tool's output quality is high enough that review doesn't eat those gains. A pilot that saves prospecting time while adding hours of review has not demonstrated ROI.
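
The trade-off described above is simple arithmetic: prospecting time saved minus review time added. A small sketch, with a hypothetical function name and made-up weekly figures, shows how the same time saving can turn negative once review takes longer per message.

```python
def net_minutes_saved(prospecting_minutes_saved: float,
                      messages_approved: int,
                      avg_review_minutes_per_message: float) -> float:
    """Prospecting time saved minus time spent reviewing AI drafts."""
    review_minutes = messages_approved * avg_review_minutes_per_message
    return prospecting_minutes_saved - review_minutes


# Illustrative weekly figures for one rep, not measured data.
print(net_minutes_saved(300, 120, 1.5))  # review is quick: net saving is positive
print(net_minutes_saved(300, 120, 3.0))  # review is slow: net saving is negative
```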

Spending hours building prospect lists manually before your pilot even starts? Search Apollo's 230M+ contacts with 65+ filters to define and lock your ICP segment in minutes.

How Do SDRs and RevOps Leaders Run the Pilot Day-to-Day?

SDRs and RevOps leaders play distinct roles in a well-run AI SDR pilot, and conflating them is a common failure mode.

SDRs are responsible for message quality review during the co-pilot phase, flagging AI outputs that miss tone, personalization, or accuracy. Their feedback directly improves the approved message library. Apollo's AI Assistant lets SDRs interact with the tool conversationally — refining prompts, editing sequences, and reviewing AI-drafted emails without switching between tools. As Erik Fernando Nieto, BDR at JumpCloud, put it: "Apollo's AI Assistant filters and cleans prospect data for me, so I can find the right people faster and run better searches. It saves me about an hour per prospecting session."

RevOps leaders own the measurement infrastructure: CRM tagging for pilot vs. control contacts, attribution rules, and the weekly scorecard. They also set the autonomy thresholds — defining what pass rate on message review unlocks the next level of automation. For more on connecting AI pilots to performance frameworks, see sales performance management strategy.
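
An autonomy threshold can be written down as an explicit rule so the unlock decision is not made ad hoc. The sketch below uses the 95% pass-rate example from the pilot structure; the `min_sample` guard is an added assumption (a pass rate over a handful of drafts says little), and all names are illustrative.

```python
def message_approval_rate(approved_without_edits: int, total_reviewed: int) -> float:
    """Share of AI drafts that passed human review without edits."""
    if total_reviewed == 0:
        return 0.0
    return approved_without_edits / total_reviewed


def unlock_autonomy(approved_without_edits: int,
                    total_reviewed: int,
                    threshold: float = 0.95,
                    min_sample: int = 50) -> bool:
    """True when enough drafts were reviewed and the pass rate meets the threshold."""
    if total_reviewed < min_sample:
        return False  # assumption: too few reviews to trust the rate
    return message_approval_rate(approved_without_edits, total_reviewed) >= threshold


print(unlock_autonomy(96, 100))  # enough reviews, rate above threshold
print(unlock_autonomy(19, 20))   # rate meets threshold, but sample is too small
```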

What Governance Controls Does an AI SDR Pilot Need?

Governance in an AI SDR pilot means defining what the AI can and cannot do before it touches a prospect.

- Approved claims library: A pre-vetted list of product claims, proof points, and value props the AI can reference. No claims outside this library.

- Pre-send human review: Required during co-pilot phase; optional for approved message types in Phase 3.

- Consistency checks: Verify AI-generated messages align with your website and marketing content. Gartner research found 69% of B2B buyers report inconsistencies between seller messages and company websites — a direct trust and conversion risk.

- Incident log: Track any message sent outside guidelines, any deliverability incident, and any buyer complaint. Review weekly.

- Data boundaries: Define which contact records the AI can access and enrich. Confirm CRM permissions before launch.
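
The "no claims outside this library" rule can be enforced mechanically if each draft is tagged with the claims it uses. This is a sketch under that assumption: the claim IDs, the tagging scheme, and the function names are hypothetical, and detecting claims in free text is a separate problem not covered here.

```python
# Hypothetical IDs standing in for entries in the approved claims library.
APPROVED_CLAIMS = {"claim-001", "claim-002", "claim-003"}


def unapproved_claims(draft_claim_ids, approved=APPROVED_CLAIMS):
    """Return the claim IDs in a draft that are not in the approved library."""
    return sorted(set(draft_claim_ids) - set(approved))


def pre_send_check(draft_claim_ids):
    """Return (ok, violations); a draft with any violation should be held for review."""
    violations = unapproved_claims(draft_claim_ids)
    return (len(violations) == 0, violations)


print(pre_send_check(["claim-001", "claim-003"]))
print(pre_send_check(["claim-002", "claim-999"]))
```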

The shift toward more formal governance in revenue tech rollouts reflects a broader industry pattern: agentic AI systems require permissioning and auditability built in from day one, not bolted on after problems surface. For a deeper look at how AI is changing B2B outreach workflows, see AI writing tools for sales: real results, real personalization.

Apollo's AI Content Center addresses consistency governance directly — you configure your value proposition, pain points, and approved messaging context once, and every AI-generated email, call script, and follow-up is grounded in that approved framework.

What Does a Pilot Scorecard Look Like?

A pilot scorecard is the single document that drives the scale decision at the end of the pilot. It should be one page, stakeholder-ready, and answer three questions: Did the AI generate pipeline? At what quality? At what operational cost?

| Metric | Pilot Cohort | Control Group | Lift |
|---|---|---|---|
| Positive reply rate | [Record result] | [Record baseline] | [Calculate delta] |
| Meeting booked rate | [Record result] | [Record baseline] | [Calculate delta] |
| SQL conversion rate | [Record result] | [Record baseline] | [Calculate delta] |
| Message approval rate | [Record result] | N/A | Target: 95%+ |
| Avg. review time per message | [Record result] | N/A | Should trend down |
| Cost per meeting booked | [Record result] | [Record baseline] | [Calculate delta] |
| Governance incidents | [Count] | N/A | Target: 0 |
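
The Lift column is the pilot rate minus the control rate, and with pilot-sized cohorts it is worth checking whether a delta could be noise. The sketch below adds a standard two-proportion z-test as one possible check; the test is a suggestion layered on top of the scorecard, not something the scorecard itself prescribes, and the counts are invented for illustration.

```python
import math


def lift(pilot_rate: float, control_rate: float):
    """Return (absolute lift, relative lift); relative is None if control is zero."""
    absolute = pilot_rate - control_rate
    relative = absolute / control_rate if control_rate else None
    return absolute, relative


def two_proportion_p_value(success_a: int, n_a: int, success_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two proportions (pooled z-test)."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (p_a - p_b) / se
    return math.erfc(abs(z) / math.sqrt(2))


# Illustrative counts: 40 positive replies from 800 pilot contacts,
# 5 positive replies from 200 holdout contacts.
absolute, relative = lift(40 / 800, 5 / 200)
p_value = two_proportion_p_value(40, 800, 5, 200)
print(absolute, relative, p_value)
```

In this made-up example the pilot reply rate is double the control rate, yet the p-value is above 0.05, which is a reminder that a small holdout can make even a large relative lift inconclusive.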

A data point worth benchmarking against: Bain & Company found sellers spend only 25% of their time actively selling, with AI capable of doubling that selling time by automating administrative work. Your scorecard's "review time per message" metric directly tests whether your pilot is achieving that shift or just moving the manual work around.

How Do You Run a Successful AI SDR Pilot With Apollo?

Apollo consolidates the core pilot infrastructure into one platform: contact data and enrichment, ICP scoring, multi-channel sequences, AI-generated messaging, and analytics — eliminating the need to stitch together separate tools for each pilot component.

For SDR teams, the Outbound Copilot automates ICP-matched list building and sequence enrollment with manual or automatic approval controls — exactly the co-pilot-to-auto-pilot structure a pilot requires. The ICP definition framework helps teams define the right pilot segment before launch. For sales leaders evaluating AI's role in broader sales transformation, Apollo's unified platform means pilot learnings carry directly into full deployment without re-integration work.

As Tory Kindlick, Head of Revenue Ops at RapidSOS, described: "Work that would've taken me hours was done before I even got off the train." That's the standard a well-configured pilot should demonstrate.

Spending too much time on manual outreach while trying to run a controlled pilot? Automate your sequences with Apollo's multi-channel engagement platform and keep your pilot infrastructure in one place.

What Should You Do After the Pilot Ends?

After the pilot, present the scorecard to leadership with a clear recommendation: scale, iterate, or stop. A positive result on meeting rate and SQL quality with acceptable governance burden is a green light for full rollout.

A mixed result, with pipeline lift but high review burden, more often points to a messaging-context configuration problem than to a tool failure, so revisit the approved message library and content settings before writing the tool off. A negative result on both means the ICP segment, message approach, or tool fit needs to be reconsidered before further investment.
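
The scale, iterate, or stop logic above can be captured as a small decision rule so the recommendation follows from the scorecard rather than from opinion. This sketch encodes one reading of the criteria; the three boolean inputs and the handling of cases the text does not spell out (they default to "iterate") are assumptions.

```python
def recommend(meeting_rate_lift: bool,
              sql_quality_ok: bool,
              review_burden_ok: bool) -> str:
    """Map scorecard outcomes to 'scale', 'iterate', or 'stop'."""
    if meeting_rate_lift and sql_quality_ok and review_burden_ok:
        return "scale"
    if not meeting_rate_lift and not sql_quality_ok:
        return "stop"
    # Mixed results, such as pipeline lift with high review burden.
    return "iterate"


print(recommend(True, True, True))
print(recommend(True, True, False))
print(recommend(False, False, True))
```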

The pilot's approved message library, governance log, and A/B test results are assets regardless of outcome. They inform your next outbound strategy even if the specific tool doesn't scale. Use lead generation tool evaluations and B2B marketing tool benchmarks to contextualize your results against the broader market before making a final call.

A structured AI SDR pilot is one of the highest-leverage investments a GTM team can make in 2026. It produces decision-quality data, builds internal confidence, and creates the governance foundation for responsible AI-powered outreach at scale. Start free with Apollo and run your first AI SDR pilot with the data, sequencing, and AI tools already built in.
