How Do I Measure the Performance of an AI SDR Compared to a Human SDR in 2026?

Most teams measuring AI SDR performance are grading it on the wrong exam. Volume metrics like emails sent or meetings booked tell you what happened at the top of the funnel — not whether it created revenue. With Gartner dedicating a session to AI SDR agents at its 2026 CSO & Sales Leader Conference, the question has shifted from "should we try AI SDRs?" to "how do we measure them fairly?" If you're building or refining your SDR strategy, understanding the SDR role end-to-end is the essential starting point. Tools like Apollo's AI Sales Assistant give revenue teams a practical lens here — it runs end-to-end outbound workflows so you can benchmark AI-assisted output against human baselines within a single platform.

Key Takeaways

- Stop measuring AI SDRs on emails sent. Grade them on meeting-held rate, opportunity creation rate, and pipeline generated per outbound dollar.

- A fair comparison requires three cohorts: fully agentic AI SDR, AI-assisted human SDR, and no-AI human SDR — with matched ICP and offer.

- Volume advantages can mask worse downstream conversion. Always track AI-authored outreach share alongside reply rate and qualification rate.

- Deliverability health (bounce rate, spam complaint rate, inbox placement) is now a core performance variable, not an ops afterthought.

- The dominant model in 2026 is hybrid: AI handles research, enrichment, and speed-to-lead; humans handle nuanced objection handling and high-stakes personalization.

Why Is Measuring AI SDR vs. Human SDR Performance So Hard?

The core problem is that most comparisons aren't controlled. An AI SDR and a human SDR rarely receive the same ICP segment, the same offer, the same channel mix, or the same sending domain — making any head-to-head result misleading. According to Cirrus Insight, AI adoption among sales representatives nearly doubled from 24% in 2023 to 43% in 2024. That means many "human SDR" benchmarks already include AI assistance, which contaminates your control group. A defensible measurement framework requires three distinct cohorts:

- Cohort A: Fully agentic AI SDR (no human in the loop)

- Cohort B: AI-assisted human SDR (rep uses AI for research and drafting)

- Cohort C: No-AI human SDR (pure human baseline)

Match all three cohorts on territory, ICP firmographics, offer, and sending-domain reputation before drawing any conclusions. Learn more about how to use sales automation the right way to avoid common measurement pitfalls.

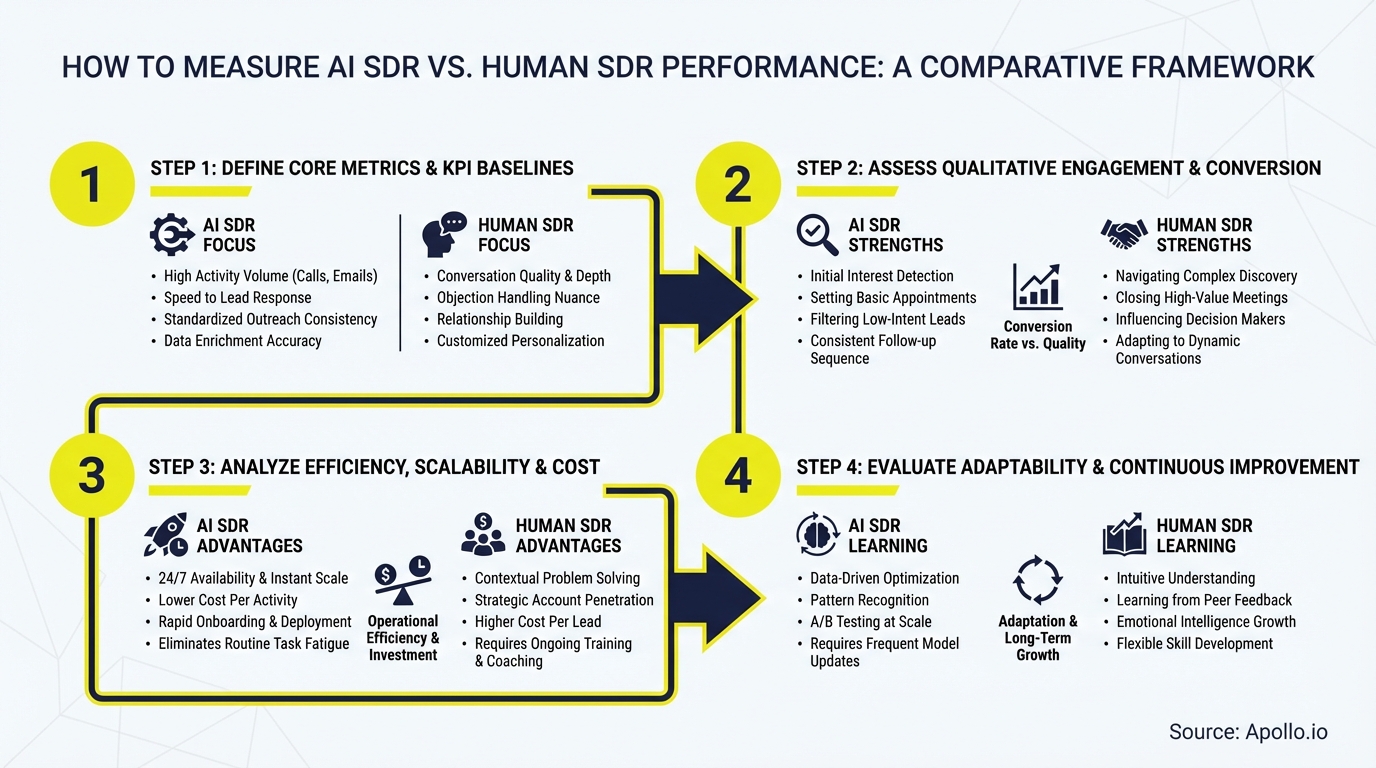

What KPIs Should RevOps Leaders Use to Compare AI and Human SDRs?

RevOps leaders should measure AI SDR performance on outcome metrics, not activity proxies. The industry is actively moving away from vanity metrics toward downstream revenue attribution.

Here is a practical KPI scorecard:

| Metric Category | KPI | Why It Matters |
|---|---|---|
| Speed | Time-to-first-touch | AI SDRs respond to lead inquiries within seconds; humans typically take minutes or hours, and speed-to-lead affects conversion |
| Coverage | Contacts worked per day | Volume capacity differs significantly between AI and human SDRs |
| Quality | Positive reply rate by segment | High volume without positive replies signals list/offer problems |
| Qualification | Meeting-held rate / ICP match rate | Meetings booked mean nothing if they don't show or aren't qualified |
| Pipeline | Opportunity creation rate | The true output of SDR effort |
| Revenue | Pipeline $ per outbound dollar spent | Unit economics that CFOs and revenue leaders care about |
| Deliverability | Bounce rate / spam complaint rate | Inbox placement is gated by sender reputation, a hidden AI risk |
| Governance | Hallucination / error rate (QA sample) | AI messaging errors damage brand trust and deliverability |
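The scorecard above reduces to a handful of ratios over per-cohort funnel counts. Here is a minimal sketch in Python. The `CohortFunnel` fields, the sample numbers, and the choice of denominators (for example, meeting-held rate as meetings held over meetings booked) are illustrative assumptions, not definitions taken from Apollo or any particular CRM.

```python
from dataclasses import dataclass


@dataclass
class CohortFunnel:
    """Raw funnel counts for one cohort over one attribution window (hypothetical schema)."""
    name: str
    emails_sent: int
    bounces: int
    spam_complaints: int
    positive_replies: int
    meetings_booked: int
    meetings_held: int
    opportunities: int
    pipeline_usd: float
    outbound_spend_usd: float


def safe_div(numerator: float, denominator: float) -> float:
    """Return 0.0 instead of raising when a cohort has no activity yet."""
    return numerator / denominator if denominator else 0.0


def scorecard(c: CohortFunnel) -> dict[str, float]:
    """Compute outcome and deliverability ratios for one cohort."""
    return {
        "positive_reply_rate": safe_div(c.positive_replies, c.emails_sent),
        "meeting_held_rate": safe_div(c.meetings_held, c.meetings_booked),
        "opportunity_creation_rate": safe_div(c.opportunities, c.meetings_held),
        "pipeline_per_outbound_dollar": safe_div(c.pipeline_usd, c.outbound_spend_usd),
        "bounce_rate": safe_div(c.bounces, c.emails_sent),
        "spam_complaint_rate": safe_div(c.spam_complaints, c.emails_sent),
    }


# Made-up numbers, purely for illustration.
cohort_a = CohortFunnel("A: agentic AI", 3000, 60, 3, 45, 30, 18, 6, 120_000.0, 4_000.0)
for kpi, value in scorecard(cohort_a).items():
    print(f"{kpi}: {value:.4f}")
```

Running the same function over Cohorts A, B, and C gives you rows you can compare directly, as long as every cohort uses the same denominators and the same attribution window.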

Struggling to track pipeline attribution across cohorts? Apollo's pipeline tools give you a single view of SDR-sourced opportunities — so you can compare AI and human output in one workspace.

How Do AI SDRs and Human SDRs Differ in Raw Output Capacity?

AI SDRs operate at a fundamentally different scale than human SDRs, which is why volume metrics alone are insufficient for evaluation. According to SaaStr, AI SDRs can send 3,221 emails per month compared with 75–285 emails per month for human SDRs, roughly an 11x to 43x volume difference. Research from MarketsandMarkets shows AI SDRs respond to lead inquiries within seconds or minutes, while human representatives typically take minutes or hours.

That volume advantage creates a measurement trap: more outreach can inflate top-of-funnel activity without improving qualified pipeline. The right question isn't "how many emails did the AI send?" — it's "what conversion rate did those emails produce, and at what cost per qualified meeting?" This is why sales performance management frameworks are evolving to emphasize downstream outcomes over activity counts.
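To make the trap concrete, the sketch below computes the volume ratio implied by the monthly email figures quoted above, then compares two cohorts on cost per qualified meeting. The function names and the cohort costs and meeting counts are hypothetical, chosen only to show that the higher-volume cohort can still be the more expensive one per qualified meeting.

```python
def volume_ratio(ai_emails: int, human_emails: int) -> float:
    """How many times more emails the AI cohort sent than the human cohort."""
    return ai_emails / human_emails


def cost_per_qualified_meeting(total_cost_usd: float, qualified_meetings_held: int) -> float:
    """Total cohort cost divided by qualified meetings actually held."""
    if qualified_meetings_held == 0:
        return float("inf")  # no qualified meetings: cost per meeting is unbounded
    return total_cost_usd / qualified_meetings_held


# Ratios implied by the quoted monthly figures (3,221 vs. 75-285 emails).
low = volume_ratio(3221, 285)
high = volume_ratio(3221, 75)
print(f"volume difference: {low:.1f}x to {high:.1f}x")

# Hypothetical cohorts: the AI cohort costs less in total but holds fewer qualified meetings.
ai_cost = cost_per_qualified_meeting(4_000.0, 10)
human_cost = cost_per_qualified_meeting(7_000.0, 20)
print(f"AI: ${ai_cost:.0f} per qualified meeting, human: ${human_cost:.0f}")
```

In this made-up case the AI cohort comes out at $400 per qualified meeting against $350 for the human cohort, even though it sent far more email.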

How Should SDRs and BDRs Run a Fair 90-Day AI vs. Human Pilot?

SDRs and BDRs running a pilot comparison should control six variables that most teams miss: ICP segment, offer/messaging, channel mix, attribution window, sending domain/IP, and calendar hygiene. Without these controls, volume advantages mask conversion quality differences.

A practical 90-day pilot structure:

- Days 1–30 (Baseline): Establish human SDR benchmarks — contacts worked, reply rate, meetings held, opportunities created. Document domain health before AI sends a single email.

- Days 31–60 (Launch): Run AI SDR on a matched segment. Track deliverability metrics (bounce rate, complaint rate) in parallel with activity metrics. Use separate sending domains per cohort.

- Days 61–90 (Attribution): Measure opportunity creation rate, pipeline generated, and cost per qualified meeting across all three cohorts. QA sample AI-generated messages for accuracy and brand compliance.
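One way to get matched segments for the pilot is to shuffle accounts within each ICP segment and deal them evenly across the three cohorts. The sketch below assumes each account is a dict with a hypothetical `icp_segment` key. The cohort labels, the fixed seed, and the sample accounts are illustrative, and this is only one of several reasonable assignment schemes.

```python
import random
from collections import defaultdict

COHORTS = ("A_agentic_ai", "B_ai_assisted_human", "C_no_ai_human")


def assign_cohorts(accounts: list[dict], seed: int = 2026) -> dict[str, list[dict]]:
    """Shuffle accounts within each ICP segment, then deal them round-robin into cohorts."""
    rng = random.Random(seed)  # fixed seed so the assignment can be reproduced and audited
    by_segment: dict[str, list[dict]] = defaultdict(list)
    for account in accounts:
        by_segment[account["icp_segment"]].append(account)

    assignment: dict[str, list[dict]] = {cohort: [] for cohort in COHORTS}
    for segment in sorted(by_segment):  # sorted for a deterministic processing order
        members = by_segment[segment][:]
        rng.shuffle(members)
        for i, account in enumerate(members):
            assignment[COHORTS[i % len(COHORTS)]].append(account)
    return assignment


# Twelve made-up accounts split across two hypothetical ICP segments.
accounts = [
    {"id": n, "icp_segment": "mid_market_saas" if n % 2 else "enterprise_fintech"}
    for n in range(12)
]
result = assign_cohorts(accounts)
for cohort, members in result.items():
    print(cohort, [a["id"] for a in members])
```

Because the shuffle happens inside each segment, every cohort ends up with the same segment mix, which stops one cohort from quietly getting the easier accounts.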

For SDRs using Apollo, the Outbound Copilot automates ICP-matched prospecting and sequence enrollment with full credit transparency — making it straightforward to isolate AI-driven activity from human-driven activity in your attribution model. Pair this with a structured sales performance management strategy to build consistent reporting across cohorts.

What Governance and Quality Controls Should Apply to AI SDR Measurement?

Governance metrics belong in the core KPI stack, not in a compliance appendix. AI-generated outreach carries three distinct risks that pure activity measurement misses: hallucinated prospect details that damage credibility, brand compliance failures, and deliverability degradation from bulk-sender rule violations.

Sales leaders should QA-sample at least 10% of AI-generated messages per week and score them for factual accuracy, ICP relevance, and tone compliance.

Key governance KPIs to track alongside output metrics:

- Error/hallucination rate: Percentage of messages with inaccurate company or contact details

- Brand compliance rate: Messages passing tone and positioning review

- Inbox placement rate: Percentage of emails reaching the primary inbox vs. spam

- Spam complaint rate: Should remain below bulk-sender thresholds set by major inbox providers
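The weekly QA sample and the first two governance KPIs can be sketched as follows. The `Review` record, the function names, and the hard-coded reviewer verdicts are assumptions for illustration. In practice a human reviewer supplies the verdicts, and inbox placement and complaint rates come from your sending infrastructure rather than from this sample.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Review:
    """One reviewer verdict on one sampled message (hypothetical schema)."""
    message_id: str
    factually_accurate: bool
    brand_compliant: bool


def draw_qa_sample(message_ids: list[str], fraction: float = 0.10, seed: int = 0) -> list[str]:
    """Randomly pick at least `fraction` of the week's messages for review."""
    if not message_ids:
        return []
    # round() guards against float noise such as 24.000000000000004 before ceil().
    size = max(1, math.ceil(round(len(message_ids) * fraction, 6)))
    return random.Random(seed).sample(message_ids, size)


def governance_rates(reviews: list[Review]) -> dict[str, float]:
    """Error rate and brand compliance rate over the reviewed sample."""
    total = len(reviews)
    if total == 0:
        return {"error_rate": 0.0, "brand_compliance_rate": 0.0}
    errors = sum(1 for r in reviews if not r.factually_accurate)
    compliant = sum(1 for r in reviews if r.brand_compliant)
    return {"error_rate": errors / total, "brand_compliance_rate": compliant / total}


week_ids = [f"msg-{n}" for n in range(250)]
sample = draw_qa_sample(week_ids)

# Verdicts are faked here with a simple pattern; a reviewer would fill these in.
reviews = [
    Review(message_id, factually_accurate=(i % 5 != 0), brand_compliant=(i % 10 != 0))
    for i, message_id in enumerate(sample)
]
print(len(sample), governance_rates(reviews))
```

Sampling at random, rather than reviewing the first messages of the week, keeps the error rate from being skewed by one campaign or one day's sends.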

Apollo's AI Content Center grounds all AI-generated messaging in your company's value proposition, pain points, and differentiators — reducing hallucination risk and keeping outputs on-brand by design. Spending too much time reviewing AI-generated messages manually? Apollo's AI sales automation keeps messaging grounded in real account context, reducing the QA burden on your team.

What Is the Right Measurement Model for 2026 and Beyond?

The right model for 2026 is not "AI vs. human" — it is "AI-augmented human vs. non-augmented human," with AI handling high-volume research and first-touch, and humans owning nuanced positioning, objection handling, and relationship-critical moments. Data from Salestools.io shows 96% of revenue leaders expect their teams to use AI tools by the end of 2026 — making the augmented human the new competitive baseline, not the exception.

The measurement framework evolves accordingly:

- Measure human-assisted lift: meetings per rep-hour with vs. without AI support

- Measure stage velocity: time from first touch to qualified opportunity, by cohort

- Measure unit economics: pipeline generated per dollar of total SDR spend (salary + tooling)

- Treat deliverability health as a first-class performance metric, not an infrastructure task
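The first three measures in this list are simple ratios, sketched below. The function names and every input number are hypothetical, and using the median for stage velocity is one reasonable choice rather than a prescribed one.

```python
from statistics import median


def meetings_per_rep_hour(meetings_held: int, rep_hours: float) -> float:
    """Meetings held divided by rep hours worked in the same period."""
    if rep_hours <= 0:
        raise ValueError("rep_hours must be positive")
    return meetings_held / rep_hours


def relative_lift(with_ai: float, without_ai: float) -> float:
    """Fractional improvement of the AI-assisted rate over the unassisted rate."""
    if without_ai <= 0:
        raise ValueError("without_ai must be positive")
    return (with_ai - without_ai) / without_ai


def pipeline_per_dollar(pipeline_usd: float, salary_usd: float, tooling_usd: float) -> float:
    """Pipeline generated per dollar of total SDR spend (salary plus tooling)."""
    total_spend = salary_usd + tooling_usd
    if total_spend <= 0:
        raise ValueError("total spend must be positive")
    return pipeline_usd / total_spend


# Made-up monthly numbers for one AI-assisted rep and one unassisted rep.
assisted = meetings_per_rep_hour(24, 160.0)
unassisted = meetings_per_rep_hour(16, 160.0)
print(f"human-assisted lift: {relative_lift(assisted, unassisted):.0%}")

# Stage velocity: days from first touch to qualified opportunity, per deal.
days_to_opportunity = [12, 20, 9, 31]
print(f"median days to opportunity: {median(days_to_opportunity)}")

print(f"pipeline per dollar: {pipeline_per_dollar(90_000.0, 6_000.0, 1_500.0):.1f}")
```

Counting tooling in the denominator matters here: an AI-assisted rep who books more meetings can still show weaker unit economics once seat and credit costs are included.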

For sales leaders building toward this model, Apollo's guide to selling with AI covers how to structure your team's workflows for measurable, repeatable outbound performance.

Start Measuring What Actually Drives Revenue

Measuring AI SDR performance fairly requires controlled cohorts, downstream outcome metrics, and governance guardrails — not just a comparison of emails sent. The teams winning in 2026 are those treating measurement as a strategic capability, not an afterthought.

Apollo's unified platform puts prospecting, sequencing, AI research, and pipeline analytics in one workspace — so you can run clean comparisons without stitching together five separate tools. As one Apollo customer put it: "Having everything in one system was a game changer" — Cyera.

Start Your Free Trial and see how Apollo helps you benchmark AI and human SDR performance side by side.
