How to Make AI-Generated Outreach Feel Personal, Not Spammy

AI-generated outreach sounds generic or spammy when the AI has no real context to work with. Feed it shallow inputs, and you get shallow outputs.

The fix is not less AI — it is better inputs: first-party account signals, verified contact data, and a clear value proposition grounded in your specific ICP.

Tools like Apollo's AI Sales Assistant are built around this principle. Instead of a generic chatbot, it runs end-to-end GTM workflows grounded in real account research, buyer signals, and your configured messaging context — so the output actually sounds like it came from a human who did their homework. Learn more in Use the AI Assistant to Sell Smarter.

Key Takeaways

- Generic AI outreach fails because the AI lacks account-specific context, not because AI itself is the problem.

- Grounding your prompts in first-party signals (job changes, funding, tech stack) is the single highest-leverage fix.

- SDR and BDR teams that use AI-driven personalization report 2x higher reply rates and a 30% increase in booked meetings, according to data from Jeeva AI.

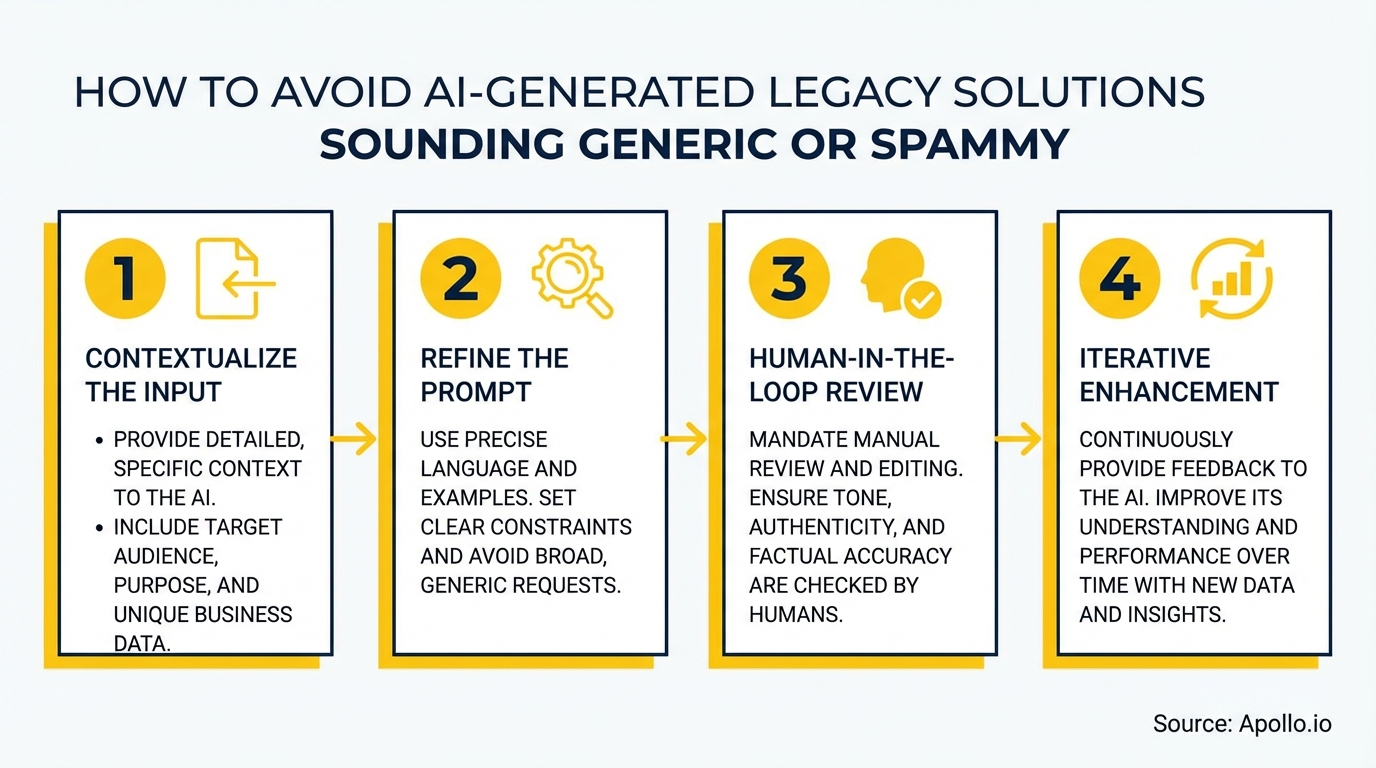

- A human-in-the-loop QA step — reviewing outputs before sending — protects deliverability and brand reputation.

- Measuring response quality and time-to-first-reply, not just open rates, reveals whether your outreach is actually resonating.

Why Does AI Outreach Sound Generic in the First Place?

AI outreach sounds generic when the model has no account-specific context and defaults to templated phrasing.

The root cause is almost always the prompt, not the model.

When reps paste a prospect's name and job title into a generic template prompt, the AI fills the remaining gaps with statistically common patterns — which sound exactly like every other AI email in a prospect's inbox.

According to Informa TechTarget, generic AI-generated content is losing effectiveness as B2B buyers grow weary of bland, repetitive material. Research from Martal shows that 54% of marketers find it hard to differentiate their content from competitors because of the rise of AI-generated content. The bar for specificity has never been higher.

What Is the Specificity Ladder for AI Outreach?

The specificity ladder is a framework for ranking the quality of context you feed into an AI prompt, from weakest (name + title) to strongest (verified signals + first-party data). Higher rungs produce messages that prospects cannot dismiss as automated.

| Rung | Context Type | Example Input | Output Quality |
|---|---|---|---|
| 1 (Weakest) | Name + job title only | "Hi [Name], VP of Sales at [Company]" | Generic, easily spotted |
| 2 | Industry + company size | "Series B SaaS, 50 employees" | Slightly relevant, still templated |
| 3 | Firmographic + tech stack signal | "Uses Salesforce, recently hired 3 SDRs" | Contextual and targeted |
| 4 (Strongest) | Trigger event + pain point + your differentiator | "Just raised Series B, expanding into EMEA, no outbound motion yet" | Specific, hard to ignore |

Signals worth including at Rung 4: recent funding rounds, job postings, earnings call themes, tech stack changes, and intent data showing active research behavior.
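
One way to make the ladder actionable is to score the context you have on a prospect before you prompt. The Python sketch below is illustrative only: the `ProspectContext` fields and the `specificity_rung` function are hypothetical names, and the rules are a rough reading of the table above, not a feature of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class ProspectContext:
    """Context a rep has gathered before prompting (field names are illustrative)."""
    name: str = ""
    title: str = ""
    industry: str = ""
    company_size: str = ""
    tech_stack: list[str] = field(default_factory=list)
    hiring_signal: str = ""
    trigger_event: str = ""
    pain_point: str = ""
    differentiator: str = ""


def specificity_rung(ctx: ProspectContext) -> int:
    """Return the highest rung (1-4) the context satisfies, or 0 if nothing qualifies."""
    has_firmographics = bool(ctx.industry and ctx.company_size)
    # Rung 4: trigger event + pain point + your differentiator
    if ctx.trigger_event and ctx.pain_point and ctx.differentiator:
        return 4
    # Rung 3: firmographics plus a tech stack or hiring signal
    if has_firmographics and (ctx.tech_stack or ctx.hiring_signal):
        return 3
    # Rung 2: industry + company size
    if has_firmographics:
        return 2
    # Rung 1: name + job title only
    if ctx.name and ctx.title:
        return 1
    return 0
```

A team could use a score like this as a gate, for example holding back any prospect below Rung 3 until more research is done.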

How Do SDRs Use AI Personalization Without Sounding Spammy?

SDRs avoid spammy AI output by configuring their AI tool with a populated content center before generating any message. This means defining your value proposition, ICP pain points, and product differentiators once — then letting AI use that context every time it writes.

In Apollo, the AI Content Center serves this purpose: it grounds all AI-generated emails, call scripts, and follow-ups in your specific company context. Apollo's AI Research then layers in live account signals — job changes, funding, tech stack — as dynamic variables inside each message.

Data from Jeeva AI shows teams using AI-driven personalization report 2x higher reply rates and a 30% increase in booked meetings. The difference between those teams and the ones getting ignored is the quality of context they feed the AI.

Struggling to build targeted prospect lists before you even start writing? Search Apollo's 230M+ contacts with 65+ filters to find the right accounts with the right signals before your AI writes a single word.

What Does a Human-in-the-Loop QA Process Look Like?

A human-in-the-loop QA process means a rep or manager reviews AI-generated messages against a short checklist before they send. This is not about rewriting everything — it is about catching the three failure modes that kill deliverability and trust: false personalization (hollow compliments), overclaiming (unprovable superlatives), and tone mismatch (too formal or too casual for the segment).

A practical QA checklist for each message:

- Falsifiable detail test: Does the message reference at least one specific, verifiable fact about this account?

- One narrow claim: Is there a single, clear value claim rather than a list of features?

- Reply invitation: Does the message invite a response with a low-friction question?

- No AI tells: Remove phrases like "I hope this finds you well," "I wanted to reach out," and "leverage" when overused.

- Deliverability check: No spam-trigger words, proper authentication in place. See the full email deliverability guide.
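
Parts of this checklist can be pre-screened automatically so the human reviewer spends time on judgment calls. The sketch below is a rough illustration: `qa_message`, the substring matching, and the `MAX_LEVERAGE_USES` threshold are all assumptions. The "one narrow claim" and deliverability items are deliberately left to the human reviewer.

```python
# Phrases the checklist names as AI tells. "leverage" is only a problem when
# overused, so it gets a separate count-based check below.
FIXED_TELLS = ("i hope this finds you well", "i wanted to reach out")
MAX_LEVERAGE_USES = 1  # assumed threshold for "overused"


def qa_message(message: str, account_facts: list[str]) -> dict[str, bool]:
    """Run the automatable checklist items; True means the check passed."""
    text = message.lower()
    has_fixed_tell = any(tell in text for tell in FIXED_TELLS)
    overuses_leverage = text.count("leverage") > MAX_LEVERAGE_USES
    return {
        # At least one known, verifiable account fact appears in the message
        "falsifiable_detail": any(fact.lower() in text for fact in account_facts),
        # Crude proxy for a reply invitation: the message asks a question
        "reply_invitation": "?" in message,
        "no_ai_tells": not (has_fixed_tell or overuses_leverage),
    }
```

Any message with a failed check would go back to the rep for a rewrite rather than being sent or silently auto-corrected.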

For teams running high volume, Apollo's Outbound Copilot supports manual approval before adding new prospects to sequences — keeping a human decision point in the loop without slowing down the overall cadence.

How Should AEs and Revenue Leaders Measure AI Outreach Quality?

Revenue leaders should track response quality metrics alongside volume metrics to know whether AI outreach is working. Open rates tell you if the subject line landed. Everything after that (reply rate, sentiment of replies, time-to-first-reply, and spam complaint rate) tells you if the message was worth reading.

| Metric | What It Signals | Action Trigger |
|---|---|---|
| Reply rate | Message relevance | Below benchmark: review prompt inputs |
| Negative reply rate | Tone or targeting mismatch | Any spike: pause sequence, audit copy |
| Spam complaint rate | Deliverability risk | Above 0.1%: reduce volume, improve segmentation |
| Time-to-first-reply | Urgency and relevance of message | Trending up: test stronger trigger-based openers |
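
Two of these triggers reduce to simple arithmetic on sequence counts. The sketch below assumes a hypothetical `SequenceStats` record and a `reply_benchmark` that your team sets itself. The 0.1% complaint limit comes from the table. Spike and trend detection for the other two metrics need historical data and are left out.

```python
from dataclasses import dataclass

SPAM_COMPLAINT_LIMIT = 0.001  # 0.1%, the threshold from the table above


@dataclass
class SequenceStats:
    """Raw counts for one outreach sequence (field names are illustrative)."""
    delivered: int
    replies: int
    spam_complaints: int


def review_sequence(stats: SequenceStats, reply_benchmark: float) -> list[str]:
    """Return the action triggers from the table that this sequence has hit."""
    actions: list[str] = []
    if stats.delivered == 0:
        return actions  # nothing delivered yet, so no rates to evaluate
    reply_rate = stats.replies / stats.delivered
    complaint_rate = stats.spam_complaints / stats.delivered
    if reply_rate < reply_benchmark:
        actions.append("review prompt inputs")
    if complaint_rate > SPAM_COMPLAINT_LIMIT:
        actions.append("reduce volume, improve segmentation")
    return actions
```

For example, a sequence with 2,000 delivered emails, 40 replies, and 3 spam complaints has a 2% reply rate and a 0.15% complaint rate, so against a 5% reply benchmark it would trip both triggers.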

For AEs managing active accounts, Apollo's Conversation Intelligence surfaces objections and deal signals from calls — giving you real context to feed back into follow-up messaging so every touchpoint builds on the last.

Spending too much time stitching together research, writing, and sending across separate tools? Run your entire outreach motion in one place with Apollo's multi-channel engagement platform.

What Are the Most Common AI Outreach Mistakes to Avoid in 2026?

The most common mistakes are treating AI personalization as a token-swap exercise (replacing [First Name] with an AI-generated compliment) and scaling volume before validating quality. Both destroy sender reputation faster than they build pipeline.

- Shallow compliments: "I loved your recent post" with no specific reference reads as automated immediately.

- Feature lists instead of one claim: Prospects do not read past the third bullet. Make one point, make it specific.

- No reply logic: Sequences that blast and never adapt to non-replies or partial engagement feel like spam because they are.

- Ignoring email personalization best practices: Personalization without relevance is noise.

- Skipping deliverability hygiene: High-volume AI sends without proper authentication will damage your domain. Authentication and complaint rates now directly determine inbox placement.

Research from Salesforge shows AI-driven personalization produces an average 34% increase in open rates when done correctly — the operative phrase being "done correctly," which requires all of the above.

How Do You Get Started with Non-Generic AI Outreach?

Start by auditing your current AI inputs before changing any tools. If your prompts do not include a specific trigger event, a defined pain point, and your value proposition, fix that first.

Better prompts outperform better tools every time.
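
The audit itself can be a small guard in front of whatever model you call. This is a minimal sketch, assuming prompt inputs arrive as a plain dictionary. The key names, `missing_inputs`, `build_prompt`, and the prompt wording are all hypothetical.

```python
REQUIRED_INPUTS = ("trigger_event", "pain_point", "value_proposition")


def missing_inputs(inputs: dict[str, str]) -> list[str]:
    """List the required prompt inputs that are absent or blank."""
    return [key for key in REQUIRED_INPUTS if not inputs.get(key, "").strip()]


def build_prompt(inputs: dict[str, str]) -> str:
    """Assemble a prompt only when all three required inputs are present."""
    missing = missing_inputs(inputs)
    if missing:
        raise ValueError(f"Prompt is missing: {', '.join(missing)}")
    return (
        "Write a short outreach email that makes one specific claim.\n"
        f"Trigger event: {inputs['trigger_event']}\n"
        f"Pain point: {inputs['pain_point']}\n"
        f"Value proposition: {inputs['value_proposition']}\n"
    )
```

Failing loudly on a missing input keeps shallow prompts from reaching the model in the first place.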

For teams ready to systematize this, Apollo consolidates prospecting, AI research, sequence building, and engagement into one platform. As Tory Kindlick, Head of Revenue Ops at RapidSOS, put it: "Work that would've taken me hours was done before I even got off the train." That speed comes from having account research, prospect data, and messaging all in the same workflow — not stitched across separate tools.

Explore the full outbound prospecting guide for a step-by-step approach, or review how to build a sales tech stack that scales without adding more point tools to manage.

The answer to generic AI outreach is not less AI. It is AI that is grounded in real signals, reviewed by a human, and measured on quality — not just volume.

Start there, and your outreach will stand out in any inbox.

Ready to make your AI outreach specific, contextual, and conversion-ready? Start Prospecting with Apollo for free and see what outreach looks like when the AI actually knows your buyer.
